From Keywords to Conversations

For decades, search operated on a simple premise: users entered two or three keywords, brands competed for page-one placement, and success meant capturing traffic volume. LLMs are dismantling that model. When users ask ChatGPT or Claude full, context-rich questions (“I have arthritis, allergies, and a sensitive stomach. What works?”), they’re revealing needs, constraints, and emotional drivers that never surfaced in a keyword-driven world. Intent is no longer fragmented across dozens of pageviews; it’s concentrated in a single conversation. For brands, this represents a fundamental shift: you’re no longer optimizing for search algorithms. You’re designing for depth.

In healthcare and pharma, this shift is seismic. Patients are already using LLMs to ask nuanced medical questions (“I’m pregnant, on metformin, and my blood sugar is unstable. What does this mean?”), revealing medical history, lifestyle factors, and emotional concerns that traditional search never captured. Pharma and healthcare brands must now design messaging and product information that reads clearly to both human readers and the AI agents that evaluate claims, efficacy, and safety. Patient acquisition strategies built on keyword volume are becoming obsolete. Brands that embrace intent depth will win trust and conversion.

What Might Be Around the Corner?

Expect AI agents to become the primary readers of medical and product data—and regulatory bodies to demand new standards for how clinical claims are presented to both humans and machines. Brands will shift from static websites to continuously evolving conversational experiences that learn from user behavior and adapt messaging in real time. The distinction between “marketing content” and “product information” will blur; everything will be conversational and contextual.

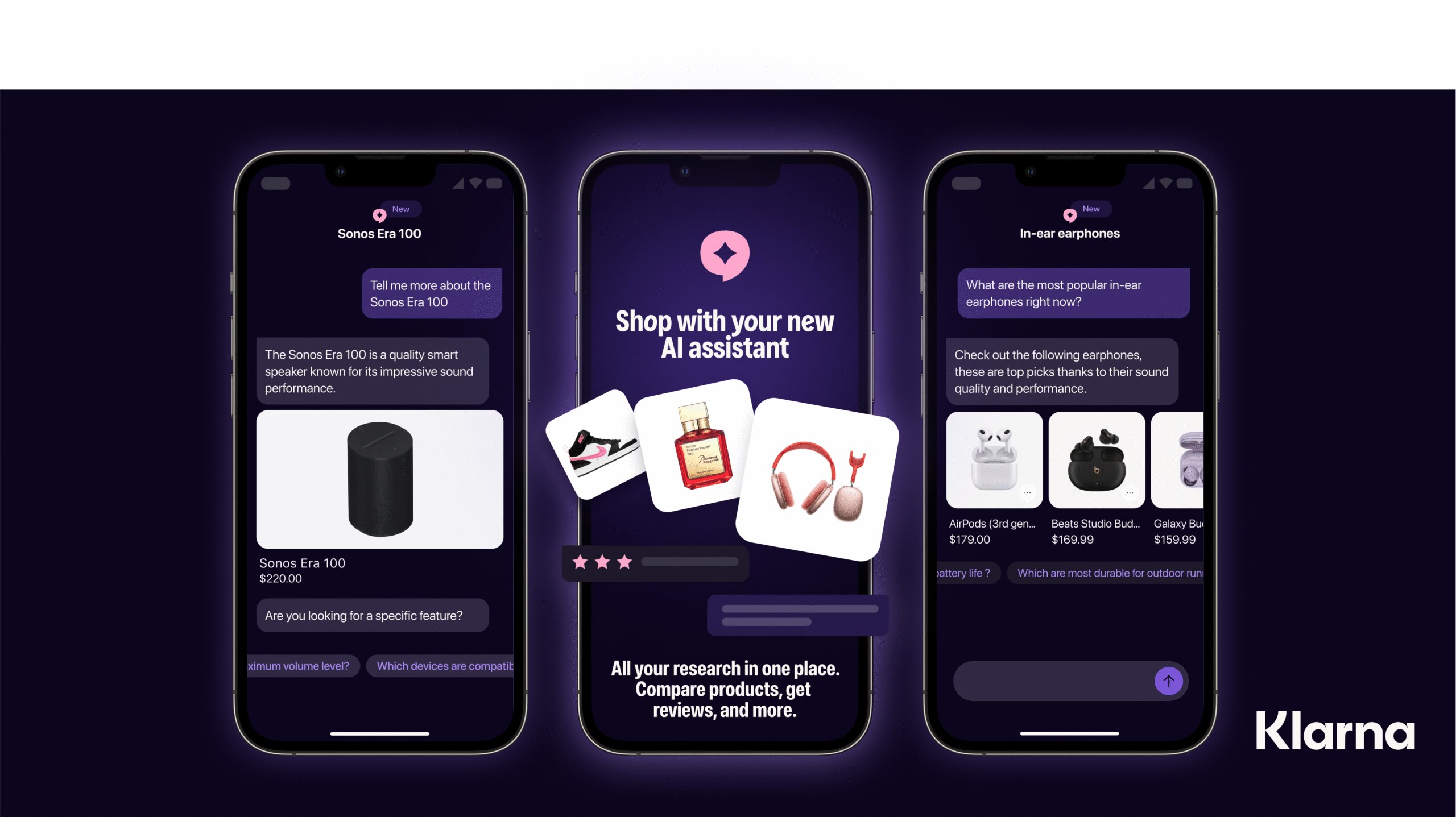

Case study #1: Shopping in Natural Language With Klarna

Klarna, the buy-now-pay-later platform, deployed an AI agent that handles product discovery, comparison, and purchases on behalf of consumers. Users describe what they want in natural language; the agent shops and compares. Within months, the AI agent was handling a significant share of customer interactions—essentially becoming Klarna’s primary salesperson. For healthcare brands, this signals an urgent need: your product positioning and safety claims must be structured so AI agents can accurately advocate for your brand to human buyers.

Case study #2: ChatGPT Health

Announced in January 2026, ChatGPT Health is a new, dedicated health experience within ChatGPT that lets consumers securely connect medical records and wellness apps (e.g., Apple Health, MyFitnessPal). With that data, the model can interpret lab results, surface trends, prepare patients for visits, and support everyday health decisions without providing diagnosis or treatment. This signals a broader shift toward AI-augmented, longitudinal patient engagement outside the clinic. Built as an isolated health space with separate memories, enhanced encryption, MFA support, and strict app-level data minimization and review, it reflects rising regulatory and public expectations for privacy-by-design in digital health tools. The product is being rolled out initially to a limited user group. Clinician collaboration is central: more than 260 physicians across 60 countries helped shape “HealthBench,” a clinical evaluation framework that emphasizes safety, clarity, and appropriate escalation, embedding clinical-style quality controls directly into the model’s behavior. For healthcare agencies, this points to an emerging trend in which general-purpose AI becomes a governed, physician-informed patient companion that operates alongside traditional care rather than replacing it, potentially influencing expectations for how systems present results, educate patients, and triage follow-up needs.